A recent special issue in Science highlights the increasingly important role that artificial intelligence (AI) plays in science and society. Providing a small but compelling sample of the types of challenges AI is equipped to tackle—from aiding chemical synthesis efforts to detecting strong gravitational lenses—the issue captures the palpable excitement about AI’s potential in a world saturated with data.

But one article in particular, “The AI detectives,” captured my attention. Rather than highlighting a specific application of AI, as the other articles do, this piece draws attention to the lack of transparency in certain machine learning algorithms, particularly neural networks. The inner workings of such algorithms remain almost entirely opaque, and they are accordingly termed “black boxes”: though they may generate accurate results, it’s still unclear how and why they make the decisions they do.

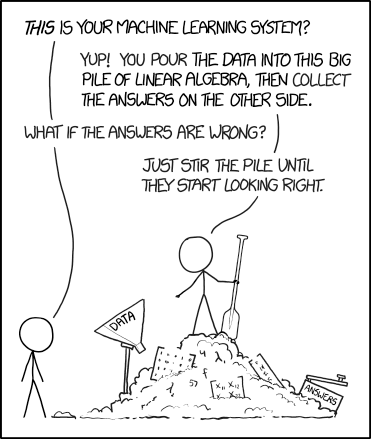

Machine Learning by XKCD is licensed under CC BY 2.5.

Researchers have recently turned their attention to this problem, seeking to understand the way these algorithms operate. “The AI detectives” introduces us to these researchers, and to their approaches to unlocking AI’s black boxes.

One such “AI detective,” Rich Caruana, is using mathematics to bring greater transparency to artificial intelligence. He and his colleagues employed a rigorous statistical approach, based on a generalized additive model, to produce a predictive model for evaluating pneumonia risk. Importantly, this model is intelligible; that is, the factors that the model weighs to make its decisions are known. Intelligibility is crucial in this setting, as previous, more opaque models conflated overall outcomes with inherent risk factors. For example, though asthmatics have a high risk for pneumonia, they typically receive immediate, effective care, which leads to better health outcomes but which also led early models to flag them, naively, as a low-risk group. Caruana et al.’s model is also modular, meaning that any faulty causal links made by the algorithm can be easily removed from its decision-making process. But while it is powerful, this approach is not well suited to complex signals, like images, and it circumvents the problem of intelligibility in artificial intelligence rather than addressing it head-on.
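To make "intelligible" and "modular" concrete, here is a minimal sketch of an additive risk model. It is not the authors' model: the three shape functions, their coefficients, and the intercept are all made up for illustration, whereas a real generalized additive model would learn each term from data. The point is only that the score is a sum of per-feature terms, so each term can be inspected or dropped on its own.

```python
import math

# Hypothetical per-feature shape functions (contributions in log-odds).
# A fitted GAM would learn these from data; the numbers here are invented.
def age_term(age):
    return 0.03 * (age - 50)

def asthma_term(has_asthma):
    # A spurious "protective" effect of the kind described above.
    return -0.8 if has_asthma else 0.0

def fever_term(temp_c):
    return 0.5 * max(0.0, temp_c - 37.5)

TERMS = {"age": age_term, "asthma": asthma_term, "temp_c": fever_term}
INTERCEPT = -2.0  # assumed baseline log-odds

def contributions(patient, terms=TERMS):
    """Intelligibility: every feature's contribution can be read off directly."""
    return {name: f(patient[name]) for name, f in terms.items()}

def risk(patient, terms=TERMS):
    """Sum the per-feature terms and squash the score into a probability."""
    score = INTERCEPT + sum(contributions(patient, terms).values())
    return 1.0 / (1.0 + math.exp(-score))

patient = {"age": 70, "asthma": True, "temp_c": 39.0}
full = risk(patient)

# Modularity: remove the faulty term without touching the others.
repaired_terms = {k: v for k, v in TERMS.items() if k != "asthma"}
repaired = risk(patient, repaired_terms)
```

With the asthma term removed, the predicted risk for this hypothetical patient goes up, because the misleading "protective" contribution no longer enters the sum.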

Grégoire Montavon and his colleagues, by contrast, have developed a method that uses Taylor decompositions to study the most opaque of machine learning algorithms, deep neural networks. Their approach (which was not mentioned in the Science article) has the advantage of explaining the decisions made by deep neural networks in easily interpretable terms. By treating each node of the neural network as a function, Taylor decompositions can be used to propagate the function value backward onto the input variables, such as pixels of an image. What results, in the case of image categorization, is an image with the output label redistributed onto input pixels: a visual map of the input pixels that contributed to the algorithm’s final decision. A fantastic step-by-step explanation of the paper can be found here.
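The idea of redistributing an output onto the inputs can be sketched in a much simpler setting than the paper's. The example below is not the deep Taylor method itself: it is a first-order Taylor decomposition of a tiny bias-free ReLU network whose weights and input are invented for illustration. For such a network, a first-order expansion taken about a root point arbitrarily close to the origin gives each input a relevance of gradient times input, and the relevances sum exactly to the output.

```python
# Hypothetical weights: 4 input "pixels" -> 3 hidden ReLU units -> sum pooling.
W = [
    [1.0, -0.5, 0.0],
    [0.5, 1.0, -1.0],
    [-1.0, 0.5, 0.5],
    [0.0, -1.0, 2.0],
]

def forward(x):
    """Return the network output and the hidden pre-activations."""
    pre = [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]
    hidden = [max(0.0, z) for z in pre]
    return sum(hidden), pre

def relevance(x):
    """First-order Taylor relevance per input: (df/dx_i) * x_i."""
    _, pre = forward(x)
    # A ReLU unit passes gradient only where its pre-activation is positive.
    active = [1.0 if z > 0 else 0.0 for z in pre]
    grad = [sum(W[i][j] * active[j] for j in range(len(active)))
            for i in range(len(x))]
    return [g * xi for g, xi in zip(grad, x)]

x = [1.0, 2.0, 0.5, 0.5]       # made-up input
output, _ = forward(x)
scores = relevance(x)          # one relevance score per input "pixel"
```

Plotting `scores` in the shape of the input image would give a heatmap of the kind described above. The deep Taylor approach applies a decomposition like this layer by layer, with root points chosen per layer, rather than once for the whole network.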

Of course, none of these artificial intelligence techniques would be possible without mathematics. Still, it is interesting to see the role that math is now playing in furthering artificial intelligence by helping us understand how it works. And as AI is brought to bear on more and more important decisions in society, understanding its inner workings is not just a matter of academic interest: introducing transparency affords more control over the AI decision-making process and helps prevent bias from masquerading as logic.